As Wired reports,

> Superhuman, the tech company behind the writing software Grammarly, is facing a class action lawsuit over an AI tool that presented editing suggestions as if they came from established authors and academics—none of whom consented to have their names appear within the product.

As Wired also reported, the company simulated feedback on users’ written work “not just from bestselling authors and famous academics of our time, but also many who died decades ago.”

None of the “critics” or their estates had given permission for this.

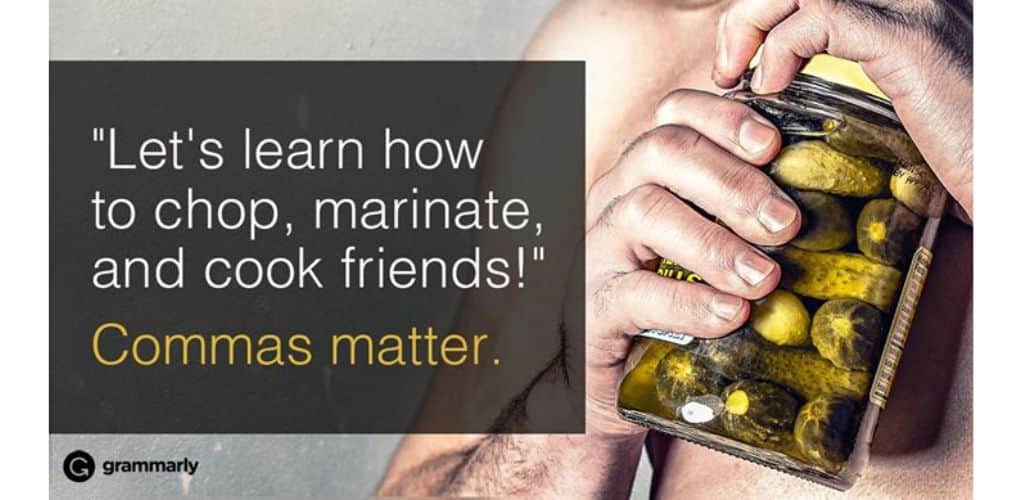

Grammarly, founded in 2009, has traditionally been used to correct grammar, spelling, and stylistic issues in writing. More recently, however, it has added generative artificial intelligence (GenAI) features.

For example, as Wired reports,

> There’s an AI chatbot that will answer specific questions as you compose a draft, a “paraphraser” feature that suggests changes in style, a “humanizer” that revises according to a selected voice, an AI grader that predicts how your document would score as college coursework, and even tools for flagging and tweaking phrases commonly produced by large language models.

Writers could also get tips “inspired by” the works of Stephen King, Neil deGrasse Tyson, the late editor William Zinsser, and the late astronomer Carl Sagan.

Presumably, the tool was trained on these writers’ works, a practice being challenged in many lawsuits, as we’ve previously discussed.

The company admitted that its “expert review” feature did not involve the active participation of the cited experts.

(We previously discussed in this blog whether it’s IP infringement to mimic an author’s or artist’s style.)

In a New York Times op-ed, Julia Angwin, an award-winning investigative journalist, announced that she was suing Grammarly.

“Like all writers,” she said, “I live by my wits.”

She continued:

> My ability to earn a living rests on my ability to craft a phrase, to synthesize an idea, to make readers care about people and places they can gain access to only through words on a page. Grammarly hadn’t checked with me before using my name. I learned that an A.I. company was selling a deepfake of my mind only from an article online.

Angwin described the imitation “editor” as a “slopperganger” and said the edits suggested by her unauthorized Grammarly persona “were making the sentences worse, more complex.”

After an online outcry, Grammarly disabled the “expert review” feature, as the BBC reported.

As the BBC notes,

> The lawsuit argues it is unlawful to use names for commercial purposes without consent and seeks to stop the platform from attributing advice to experts that they “never gave.”

The tort of false endorsement occurs when a business falsely implies that a person (usually a celebrity or expert) endorses its product or service, leading to consumer confusion.

The Lanham Act, at 15 U.S.C. § 1125(a)(1)(A), prohibits any “false designation of origin, false or misleading description of fact, or false or misleading representation of fact” that is “likely to cause confusion, or to cause mistake, or to deceive as to the affiliation, connection, or association of such person with another person, or as to the origin, sponsorship, or approval of his or her goods, services, or commercial activities by another person.”

The Federal Trade Commission (FTC) has determined that

> It is an unfair or deceptive trade practice to make claims which represent, expressly or by implication, that a third party has endorsed a product or its performance when such third party has not in fact endorsed such product or its performance.

Such claims violate Section 5 of the Federal Trade Commission Act. As of 2025, the maximum civil penalty for a Section 5 violation was $53,088 per violation.

Just like the haiku above, we like to keep our posts short and sweet. Hopefully, you found this bite-sized information helpful. If you would like more information, please do not hesitate to contact us here: https://aeonlaw.com/contact-us/.